Waymo's World Model, GPT-5.3-Codex, Opus 4.6 | 0032

Self-steering AI, steering agent teams, and AI steering cars.

As a reminder, Intent is all about helping talent in tech become more intentional with their career by staying informed, fluent, and aware of what’s going on in and around the industry. Thanks for sticking with us!

This is our first issue after transitioning to Substack! Mind helping us warm up this new sender email? Hit reply and tell us what AI app you use the most! We’ll publish anonymized data from the many thousands of you in a few weeks. :)

Today’s agenda:

Waymo shows us why World Models will define the future.

OpenAI and Anthropic released new models yesterday. TLDR?

Quick hit links to fuel your weekend reading.

Waymo is training itself in simulated worlds

If you’ve been reading Intent for any length of time, you know we’re fans of world models (like Google’s Genie 3) and of the ability of embodied agents (like Google’s SIMA 2) to “practice” inside them.

The more robust the world model, the more accurately it simulates physics and real-world dynamics. That means embodied agents practicing inside these simulated environments are effectively generating synthetic training data about what it’s like to interact with the real world.

And now we have a fantastic example of how it all comes together:

Today, Waymo announced details about the Waymo World Model, built upon Genie 3.

Why this matters: most of the training data and driver simulation behind self-driving systems depends on real-world data, typically previously recorded footage or new footage captured by cameras and sensors.

The black swan problem: models can’t explore or train on what they haven’t seen in past data, such as rare weather events or one-off incidents (Google’s post points to the example of an elephant on the road).

The fidelity gap: some footage, such as video from dashcams or mobile devices, is far less usable than the multi-angle, multi-sensor training data that Google’s latest camera and lidar setups can capture.

AI combo breaker: using the Waymo World Model, researchers can do things like:

simulate long-tail unlikely scenarios, like an elephant on a city street

using feedback from humans or past Waymo data, reconstruct alternative paths the Waymo Driver model could’ve taken to optimize its routing

generate realistic 3D and 4D data that might be missing from older or incomplete footage (ex. narrow dashcam footage becomes wide-angle and clearer, and is enriched with simulated lidar)

Because the world model can generate accurate, robust simulations, the team can design scenarios with prompts and steering, letting their models improve faster than they could if they relied on real-world data alone.

As Google put it: “By simulating the ‘impossible’, we proactively prepare the Waymo Driver for some of the most rare and complex scenarios. This creates a more rigorous safety benchmark, ensuring the Waymo Driver can navigate long-tail challenges long before it encounters them in the real world.”

We live in exciting times.

(btw, before we steer into the next topic: context engineering has become the key skill for getting outlier-excellent outcomes from AI. Join Sherveen for a 30-minute workshop on Mon, Feb 9. Sign up here.)

You don’t need to try them, but you should care

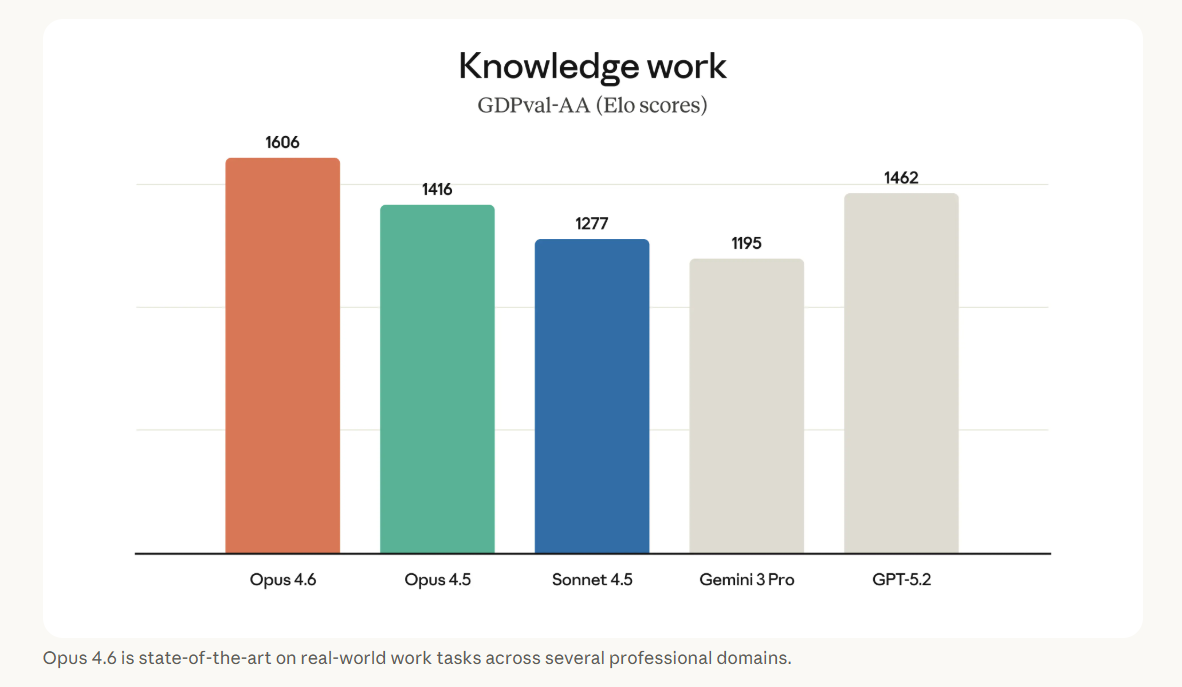

Yesterday, Anthropic released Opus 4.6 and OpenAI released GPT-5.3-Codex. Both look like incremental updates over their predecessors (Opus 4.5 and GPT-5.2-Codex), but there’s more to these releases than meets the eye.

These models, in contrast to mainline Claude models (Sonnet) and mainline GPT models, are particularly focused on code and agentic behavior. In other words, they may not wow you with big jumps in raw intelligence, but based on benchmarks and real-world tests so far, they’re far better than their predecessors at interfacing with and acting on the real world.

As we’ve been discussing for the past year, the agentic harnesses that ‘surround’ the models (think: Claude Code, Manus, OpenClaw) have improved dramatically and are now a key driver of AI progress.

In that world, even incremental improvements to the core models can translate into dramatic gains when paired with the right harness.

Opus 4.6 is a good example of this: while it’s only moderately better than Opus 4.5 on key coding benchmarks, it’s…

23.9% better on agentic web search (source)

8.6% better at financial analysis

13.4% better at doing valuable tasks in Office

part of the new Claude in Excel and Claude in PowerPoint updates

part of Claude Code’s new Agent Teams update

Then there’s GPT-5.3-Codex, which posts SOTA scores on Terminal-Bench (20.8% better than GPT-5.2-Codex) and SWE-Bench Pro. In most cases, it’s also the model AI coding power users prefer over Opus 4.6, and we agree.

Even more interesting, OpenAI confirmed what its staff had been hinting at on X for weeks: its product and engineering teams are increasingly relying on Codex to help build these new models. Here are some choice quotes:

“The engineering team used Codex to optimize and adapt the harness for GPT‑5.3-Codex. When we started seeing strange edge cases impacting users, team members used Codex to identify context rendering bugs, and root cause low cache hit rates. GPT‑5.3-Codex is continuing to help the team throughout the launch by dynamically scaling GPU clusters to adjust to traffic surges and keeping latency stable.”

“A data scientist on the team worked with GPT‑5.3-Codex to build new data pipelines and visualize the results much more richly than our standard dashboarding tools enabled. The results were co-analyzed with Codex, which concisely summarized key insights over thousands of data points in under three minutes.”

We live in such exciting times!

Quick links

Arcada Labs has a variety of AI models duking it out, each running its own X account, to see which one can build the most engaged audience.

There are a lot of ‘self-driving’ AI agents running around now, like Clawdbots, and those agents can now hire a human on RentAHuman for tasks they need done in “meatspace” (yes, really).

Want to use AI to build slides better, faster, sexier? If you think the current AI products don’t hit the mark, consider using Claude Code with a simple frontend.

Think a friend could use a dose of Intent? Forward this along – inbox envy is real.

Sent with Intent,

By Free Agency